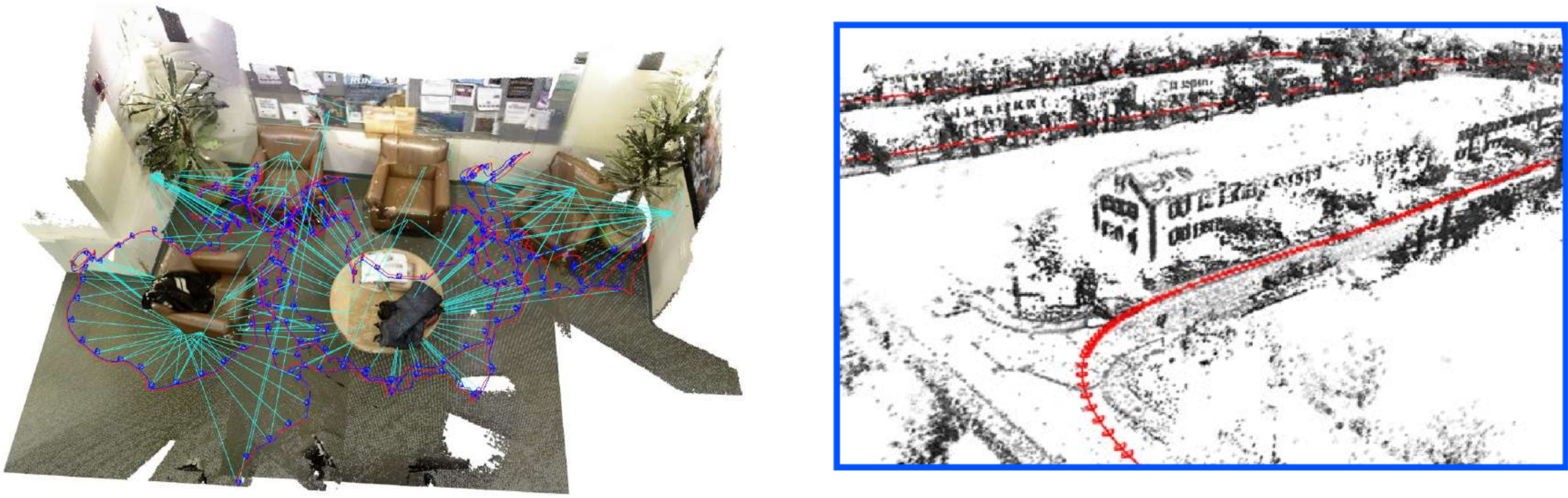

Left: RGB-D SLAM with maps that combine keyframes with planar objects [ ]. Right: Monocular visual odometry using deep-learning based monocular depth estimation [ ].

Simultaneous localization and mapping or structure-from-motion is an important subfield in computer vision and robotics. It enables the 3D reconstruction of unknown environments from moving cameras and allows for localizing in the built map. We investigate direct visual SLAM approaches that enable robots to acquire 3D maps of the environment and localize in the maps in real-time. For instance, in [ ], we combine keyframe-based direct SLAM with tracking and mapping of planar objects in a single optimization framework. The addition of planar objects provides semantic information in the map, reduces drift during camera tracking and improves the estimated map and trajectory estimate. Deep Virtual Stereo Odometry (DVSO, [ ]) is a hybrid direct method that uses a learned model for monocular depth prediction as prior for direct monocular SLAM in a classical optimization pipeline. The model predicts dense depth from single images which is used to initialize depth and regularize the depth estimation during optimization.

Cameras record images with a limited framerate (e.g., 30Hz), and a reference image or 3D reconstruction is required to estimate the motion relative to these references. Inertial measurement units well complement cameras with their higher frame-rate measurements which directly sense linear accelerations and rotational velocities in 3 axes. Visual-inertial SLAM approaches seek to combine both sensing modalities for more robust and accurate tracking than each sensing modality alone. In [ ] we present a novel approach to visual-inertial SLAM which estimates camera motion and 3D reconstruction in a two-layer hierarchical pipeline. On the lower visual-inertial odometry layer, camera motion, 3D reconstruction of keypoints, and IMU sensor biases are estimated in a sliding window approach for a set of recent frames and selected keyframes. Older frames are marginalized from the optimization window to keep their estimates as prior information. The global mapping layer optimizes all key frame poses and a 3D reconstruction of keypoints using probabilistic summaries of the accumulated estimates and sensor information between the keyframes from the visual odometry layer. With loop-closing constraints, the mapping layer can compensate for drift accumulated in the visual odometry layer and achieve a more accurate overall estimate of trajectory and map.