In this project, we investigate methods for giving intelligent robots an understanding and a sense of physics about their environment. By this, the robots could obtain plausible 3D scene reconstructions and predict the outcome of interactions with the environment. In Zhu et al. [ ], we present a method which learns to embed videos using a variational recurrent neural network into a latent state representation which can be interpreted for dynamics and appearance quantities. We optimize the latent codes of the network using total correlation objectives to improve the disentanglement of the learned latent state representation. We also study the effects of partial supervision to recover latents that model dynamics properties.

In Kandukuri et al. [ ] we embed a differentiable physics simulation into a deep neural network which we train on video sequences in a self-supervised way. The differentiable physics engine is based on a time-stepping velocity- and constraint-based formulation and can model 3D geometric shape primitives such as boxes, cylinders and spheres. It includes collision, friction and joint constraints. The predicted velocity in each time step is found by solving a linear complementarity problem which can be differentiated at the solution. While a CNN encoder embeds images into the physical positions of objects, the dynamics is propagated by the differentiable physics simulation. The method learns the parameters of the CNN and can concurrently recover physical paramters such as mass or friction. The inductive bias by the physics simulation achieves better accuracy and generalization than a pure neural network based approach in prediction performance of future states and video frames.

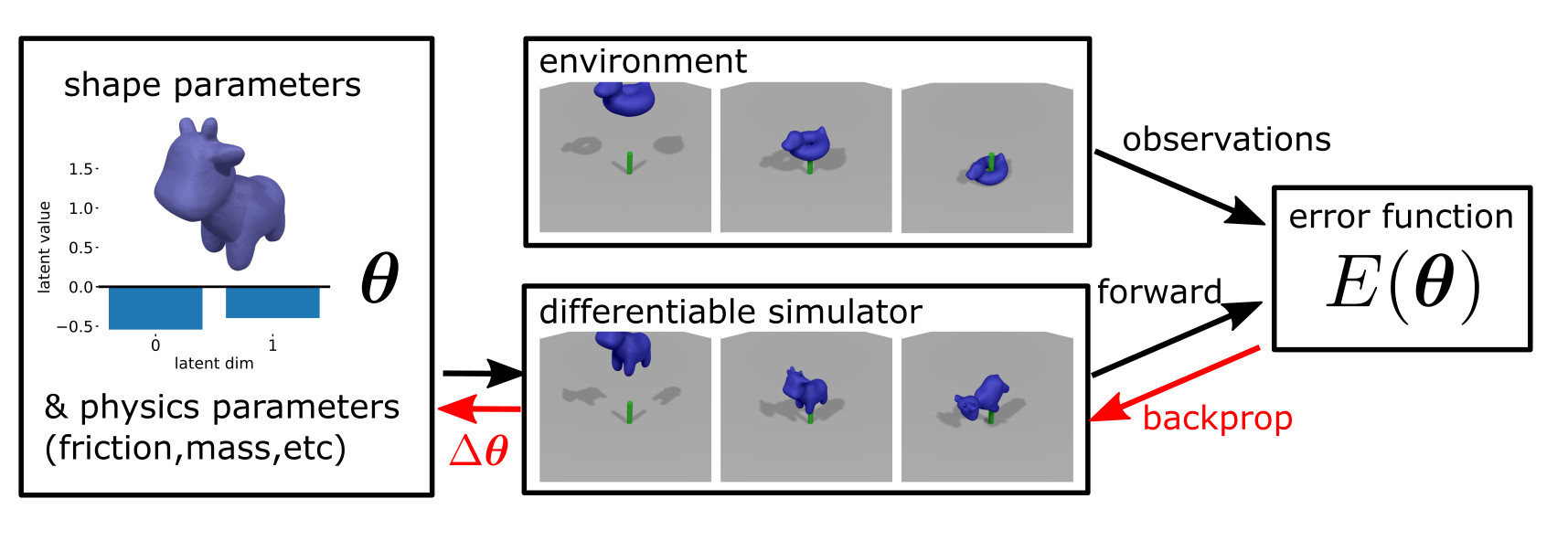

We also propose a differentiable physics simulation coined DiffSDFSim [ ] which can simulate the motion and collisions of arbitrary watertight shapes. We represent the shapes using signed distance functions. We demonstrate that shape parameters can be recovered together with physics parameters like mass and friction or external forces on the objects from sample trajectories or depth image sequences in several scenarios such as objects bouncing against a wall, falling on another object, or wrenches applied on the objects.